OpenAI has rolled out ChatGPT Images 2.0, its most advanced image-generation model yet, which delivers a striking leap in accuracy—particularly when it comes to rendering readable, realistic text inside images. The update, announced on April 21, 2026, addresses one of the most persistent pain points in AI visuals and signals how quickly multimodal AI is maturing.

For years, generating text within images has been a notorious weakness. OpenAI's own DALL-E 3, released in 2023, frequently produced comical spelling errors.

A prompt for a Mexican restaurant menu might yield dishes like "enchuita," "churiros," "burrto," and "margartas," alongside nonsensical prices. Images 2.0 changes that. The same request now produces a clean, professional-looking menu with correctly spelled items, realistic prices, and a print-ready layout, though the $13.50 ceviche might still raise eyebrows.

The improvement stems from deeper architectural shifts. Earlier diffusion models, which build images by gradually denoising random static, treat letters as just another pixel detail and often fail at small or dense text. OpenAI has not officially confirmed the inner workings of Images 2.0, but industry observers note it appears to lean on autoregressive techniques—similar to how large language models predict the next token—which allow sequential, more precise construction of text elements.
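To make the "next token" idea concrete, here is a toy Python sketch of greedy autoregressive decoding. It is purely illustrative and assumes nothing about how Images 2.0 actually works, which OpenAI has not disclosed: the hypothetical `TOY_MODEL` table stands in for a learned network and simply maps the last two emitted characters to the next one. What matters is the control flow: each character is chosen only after the previous ones exist, so a word such as "burrito" is spelled out step by step rather than emerging all at once from noise.

```python
from typing import Dict, List, Tuple

START, END = "^", "$"

# Hypothetical toy "model": maps the last two symbols of the prefix to the next symbol.
# A real autoregressive model conditions on the entire prefix (plus the prompt) and
# outputs a probability distribution over a large vocabulary; this lookup table only
# mimics the one-token-at-a-time control flow.
TOY_MODEL: Dict[Tuple[str, str], str] = {
    (START, START): "b",
    (START, "b"): "u",
    ("b", "u"): "r",
    ("u", "r"): "r",
    ("r", "r"): "i",
    ("r", "i"): "t",
    ("i", "t"): "o",
    ("t", "o"): END,
}


def generate(model: Dict[Tuple[str, str], str], max_len: int = 20) -> str:
    """Greedy autoregressive decoding: every step reads the symbols already emitted."""
    context: List[str] = [START, START]  # padded prefix so the first lookup has two symbols
    out: List[str] = []
    for _ in range(max_len):
        nxt = model[(context[-2], context[-1])]  # predict the next symbol from the prefix
        if nxt == END:  # the model signals the word is complete
            break
        out.append(nxt)
        context.append(nxt)  # the new symbol becomes part of the context for the next step
    return "".join(out)


print(generate(TOY_MODEL))  # burrito
```

A real system would replace the lookup with a learned distribution and sample or search over it, but the sequential dependency shown here is the property observers credit for more reliable spelling.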

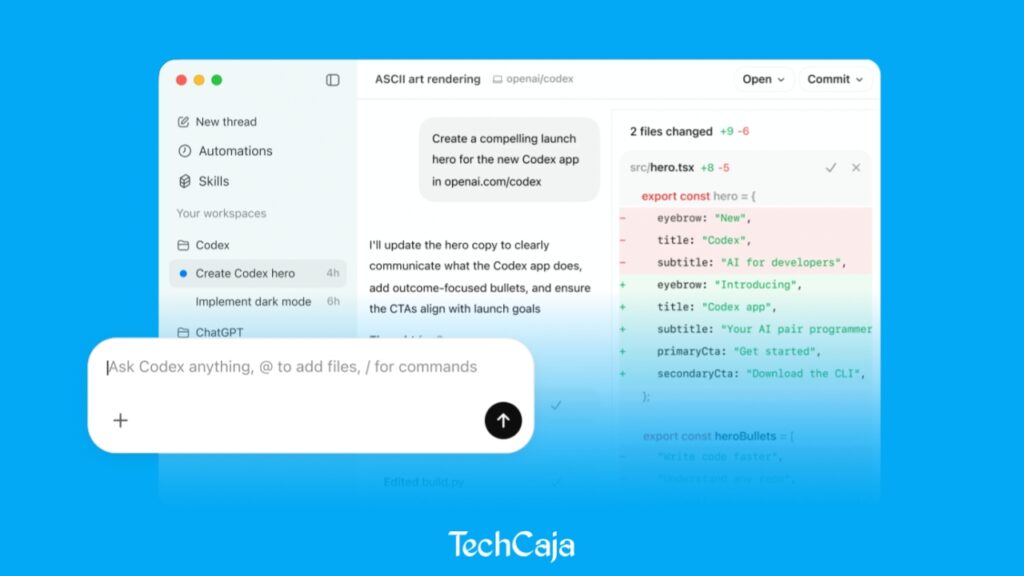

Beyond text, the model gains "thinking capabilities" powered by ChatGPT's reasoning engine. It can search the web for up-to-date context, generate multiple images from a single prompt, and check its own output before delivering the final result. These features unlock practical applications that previous models could only approximate.

Users can now request full marketing asset suites in different sizes and formats, or complete multi-panel comic strips, all from one instruction. The model also shows stronger support for non-Latin scripts, accurately rendering Japanese, Korean, Hindi, and Bengali text—expanding its usefulness in global markets.

OpenAI describes the upgrade in strong terms. In its official release, the company stated: “Images 2.0 brings an unprecedented level of specificity and fidelity to image creation. It can not only conceptualize more sophisticated images, but it actually brings that vision to life effectively, able to follow instructions, preserve requested details, and render the fine-grained elements that often break image models: small text, iconography, UI elements, dense compositions, and subtle stylistic constraints, all at up to 2K resolution.”

The model's knowledge cutoff is December 2025, meaning it draws on information available up to that point when grounding prompts in real-world details. Generation times are noticeably longer than for simple text chats, and complex scenes or multi-image requests can take several minutes, though for many professional use cases the quality may justify the wait.

Availability is immediate and broad: as of April 22, 2026, ChatGPT Images 2.0 is live for all ChatGPT and Codex users worldwide. Free-tier users gain access to the core model, while paid subscribers receive higher limits, faster generation, and priority access to advanced features. OpenAI will also release a dedicated API version called gpt-image-2 in the coming days, with pricing expected to scale according to image resolution and complexity.

This release comes amid OpenAI’s broader push to make ChatGPT a full creative companion rather than just a conversational tool. Adele Li, product lead for ChatGPT Images, emphasized in recent briefings that visual intelligence is now central to the vision of personalized AI assistants. By combining strong text rendering with reasoning-driven generation, Images 2.0 moves AI visuals closer to production-ready design work.

Analysts say the update could accelerate adoption in marketing, education, publishing, and social media content creation, where accurate typography and branding elements are non-negotiable. While the model is not perfect—occasional stylistic inconsistencies or overly literal interpretations of prompts still appear—it represents the clearest sign yet that AI image generators have crossed a critical threshold.

OpenAI has not detailed a roadmap beyond the API launch, but the rapid iteration from DALL-E 3 to Images 2.0 suggests continued focus on fidelity, speed, and multilingual support. For now, users can test the new model directly in ChatGPT and begin experimenting with prompts that once seemed impossible.

Source: OpenAI.